The Evolving IBM Landscape and What It Means for DataStage Users

IBM DataStage has been a cornerstone of enterprise ETL for over two decades. It powers mission-critical data integration at banks, insurers, healthcare organizations, and government agencies worldwide. But the platform’s trajectory within IBM’s broader strategy has raised important questions for organizations planning their next five years of data infrastructure.

IBM has progressively repositioned DataStage within its Cloud Pak for Data portfolio, shifting focus from standalone on-premises deployments to a cloud-integrated offering. While IBM continues to support DataStage, several trends are reshaping the landscape:

- Acquisition-driven roadmap changes: IBM’s acquisitions and divestitures have repeatedly altered the DataStage product roadmap, creating uncertainty for long-term planning.

- Cloud Pak complexity: Moving to Cloud Pak for Data introduces Kubernetes, OpenShift, and containerization complexity that many DataStage teams are not staffed to manage.

- Market momentum: The center of gravity in data engineering has shifted decisively toward Spark-based platforms, cloud-native warehouses, and open-source orchestration tools. DataStage’s market share has contracted as modern alternatives have matured.

- Community and ecosystem: The developer ecosystem around tools like dbt, Airflow, and Spark dwarfs the DataStage community, resulting in faster innovation, more integrations, and broader talent availability.

Gartner’s Magic Quadrant for Data Integration Tools has repeatedly repositioned IBM relative to cloud-native challengers. Organizations that depend on DataStage should evaluate migration not as an emergency but as a strategic opportunity to modernize.

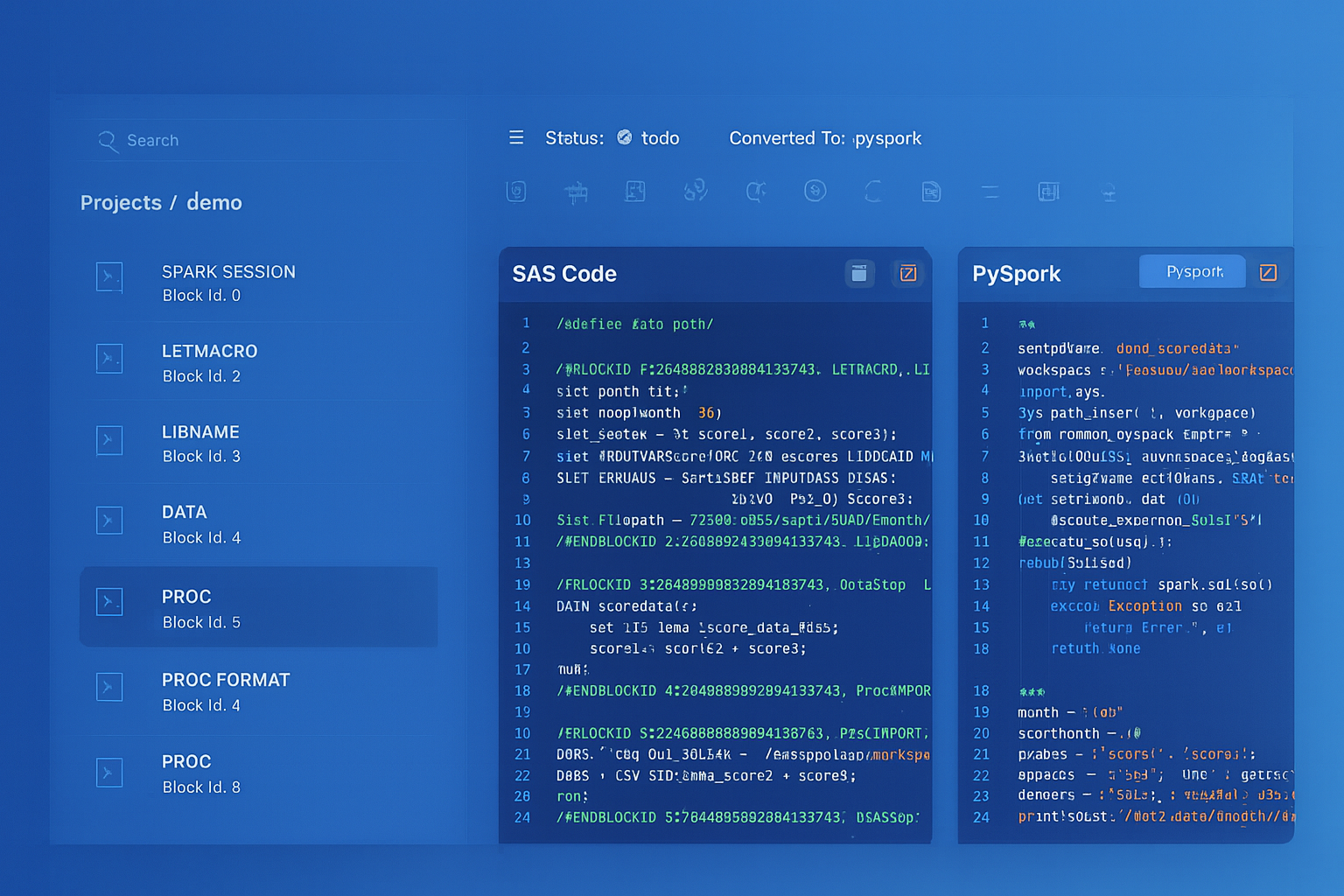

IBM DataStage to Apache PySpark migration — automated end-to-end by MigryX

Pain Points Driving Migration Decisions

Beyond strategic market concerns, DataStage users face concrete operational pain points that make migration increasingly attractive:

Licensing Costs

DataStage Enterprise Edition licensing is processor-value-unit (PVU) based, and costs escalate rapidly as data volumes grow. A typical mid-market deployment runs $500K–$1M annually in licensing alone. Cloud-native alternatives operate on consumption-based pricing that scales with actual usage rather than reserved capacity.

Mainframe and On-Premises Dependencies

Many DataStage environments are tightly coupled to mainframe data sources, on-premises databases, and legacy file systems. These dependencies create infrastructure debt that cloud migration can resolve but that must be carefully mapped before any conversion begins.

Skill Shortages

DataStage expertise is concentrated in a shrinking pool of senior developers, many approaching retirement. Recruiting new DataStage talent is increasingly difficult and expensive. Python, Spark, and SQL skills are far more abundant and less costly to acquire.

Testing and DevOps Gaps

DataStage’s testing capabilities are limited compared to modern frameworks. Unit testing individual stages, mocking data sources, and integrating with CI/CD pipelines requires significant custom tooling. Modern ETL platforms come with built-in testing, version control, and deployment automation.
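As a minimal sketch of what stage-level testing can look like once transformation logic lives in plain Python, the example below writes a Transformer-style derivation as a pure function and checks it with pytest-style test functions. The function name `derive_customer_fields` and the column names are hypothetical, chosen only for illustration.

```python
def derive_customer_fields(row: dict) -> dict:
    """Hypothetical Transformer-style derivation: normalize the name and
    flag accounts whose balance is negative."""
    name = (row.get("name") or "").strip().upper()
    balance = row.get("balance")
    return {
        "customer_id": row["customer_id"],
        "name": name,
        # A missing balance is treated as "not overdrawn" in this sketch.
        "is_overdrawn": balance is not None and balance < 0,
    }


def test_trims_and_uppercases_name():
    out = derive_customer_fields({"customer_id": 1, "name": "  ada ", "balance": 10})
    assert out["name"] == "ADA"
    assert out["is_overdrawn"] is False


def test_null_inputs_are_handled():
    out = derive_customer_fields({"customer_id": 2, "name": None, "balance": None})
    assert out["name"] == ""
    assert out["is_overdrawn"] is False
```

Because the function takes and returns ordinary dictionaries, no data source needs to be mocked, and the tests can run in any CI pipeline that runs Python.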

MigryX: Purpose-Built Parsers for Every Legacy Technology

MigryX does not rely on generic text matching or regex-based parsing. For every supported legacy technology, MigryX has built a dedicated Abstract Syntax Tree (AST) parser that understands the full grammar and semantics of that platform. This means MigryX captures not just what the code does, but why — understanding implicit behaviors, default settings, and platform-specific quirks that generic tools miss entirely.

Modern Alternatives: Where to Land

The modern ETL landscape offers several mature alternatives, each suited to different organizational profiles:

PySpark on Databricks

The most common target for DataStage migrations involving large-scale data processing. PySpark provides distributed compute, Delta Lake delivers ACID transactions, and Databricks Workflows handles orchestration. The programming model maps well to DataStage parallel jobs.

Snowpark on Snowflake

Ideal for organizations whose data already resides in Snowflake or that want to consolidate compute and storage. Snowpark Python lets you write DataFrame-style transformations that execute inside Snowflake, avoiding data movement entirely.

dbt (Data Build Tool)

Best for SQL-centric transformations. DataStage jobs that are essentially SQL-based can be re-expressed as version-controlled, tested, documented dbt models. dbt excels at ELT patterns where raw data is loaded first and transformed in place.

Apache Airflow

While not a transformation engine itself, Airflow replaces DataStage’s Job Sequencer for orchestration. It schedules and monitors Python, Spark, or dbt jobs with dependency management, retry logic, and alerting.
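To make the sequencing idea concrete, here is a small sketch of dependency-ordered execution using Python's standard `graphlib` module. It is a stand-in for the concept only and does not use Airflow's API; the job names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical job sequence: each key maps to the set of jobs it depends on.
dependencies = {
    "extract_accounts": set(),
    "extract_transactions": set(),
    "join_and_enrich": {"extract_accounts", "extract_transactions"},
    "load_warehouse": {"join_and_enrich"},
}


def execution_order(deps: dict) -> list:
    """Return one valid run order in which every job follows its upstream jobs."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

An orchestrator adds scheduling, retries, and alerting on top of this ordering, but the dependency graph itself is the part that carries over from a DataStage sequence.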

| DataStage Concept | PySpark / Databricks | Snowpark / Snowflake | dbt |
|---|---|---|---|
| Parallel Job | PySpark script / notebook | Snowpark procedure | dbt model (SQL) |
| Server Job | Python script | Snowflake task | dbt run-operation |
| Stage (Transformer) | DataFrame transformation | DataFrame transformation | SQL SELECT / CTE |
| Sequence Job | Databricks Workflow / Airflow DAG | Snowflake Task DAG | dbt DAG + Airflow |
| Lookup Stage | broadcast join | JOIN with small table | SQL JOIN |
| Join / Merge Stage | join() / unionByName() | JOIN / UNION ALL | SQL JOIN / UNION |
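The Lookup Stage row in the table above can be illustrated without a cluster. The sketch below mimics what a broadcast hash join does conceptually: the small reference table becomes an in-memory dictionary that every partition of the large dataset probes. This is plain Python for illustration, not PySpark code, and the table and column names are hypothetical.

```python
def broadcast_lookup(partitions, reference_rows, key):
    """Left-join each partition of a large dataset against a small reference table.

    partitions:     list of lists of dict rows (the large, partitioned input)
    reference_rows: list of dict rows (the small lookup table, unique on `key`)
    """
    # "Broadcast": build one hash map from the small table; every partition reuses it.
    lookup = {ref[key]: ref for ref in reference_rows}
    ref_columns = [c for c in reference_rows[0] if c != key] if reference_rows else []

    result = []
    for part in partitions:
        joined = []
        for row in part:
            match = lookup.get(row[key])
            # Unmatched rows are kept with None values, as in a left outer join.
            extra = {c: (match[c] if match else None) for c in ref_columns}
            joined.append({**row, **extra})
        result.append(joined)
    return result


orders = [
    [{"order_id": 1, "country": "DE"}, {"order_id": 2, "country": "FR"}],
    [{"order_id": 3, "country": "XX"}],
]
countries = [
    {"country": "DE", "country_name": "Germany"},
    {"country": "FR", "country_name": "France"},
]
enriched = broadcast_lookup(orders, countries, key="country")
```

The large dataset is never reshuffled here, which is the property that makes a broadcast join a natural fit for lookups against small reference tables.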

From parsed legacy code to production-ready modern equivalents — MigryX automates the entire conversion pipeline

From Legacy Complexity to Modern Clarity with MigryX

Legacy ETL platforms encode business logic in visual workflows, proprietary XML formats, and platform-specific constructs that are opaque to standard analysis tools. MigryX’s deep parsers crack open these proprietary formats and extract the underlying data transformations, business rules, and data flows. The result is complete transparency into what your legacy code actually does — often revealing undocumented logic that even the original developers had forgotten.

Key Challenges in DataStage Migration

DataStage migrations are non-trivial. The platform’s unique architecture introduces challenges that do not exist in simpler ETL tool conversions:

Parallel Job Complexity

DataStage parallel jobs operate on a pipeline-parallel model where data streams through stages concurrently. The execution graph is compiled into an OSH (Orchestrate Shell) script that manages inter-process communication, buffering, and partitioning. Reproducing this behavior in PySpark requires understanding how DataStage partitions data across processing nodes and ensuring that the Spark equivalent achieves the same data distribution.

Server Routines and BASIC Transforms

Server jobs use a BASIC-derived language (DataStage BASIC) for custom transformations. These routines must be translated to Python, which usually involves parsing BASIC syntax for string manipulation, date arithmetic, and conditional logic that has no direct Spark equivalent.
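As a rough illustration of what such a translation can look like, the sketch below gives Python stand-ins for two common BASIC idioms: extracting a delimited field and converting an internal day number to a calendar date. It assumes the Pick-style convention in which day 0 is 31 December 1967, and it simplifies edge cases (for example, occurrences below 1 raise an error here). The helper names are hypothetical, and the behavior should be verified against your own routines.

```python
from datetime import date, timedelta

# Assumption: internal dates count days from 31 December 1967 (day 0).
_INTERNAL_DATE_EPOCH = date(1967, 12, 31)


def internal_date_to_date(day_number: int) -> date:
    """Convert an internal day number to a calendar date."""
    return _INTERNAL_DATE_EPOCH + timedelta(days=day_number)


def field(text: str, delimiter: str, occurrence: int) -> str:
    """Return the 1-based `occurrence`-th delimited substring, or "" if absent.

    Simplified stand-in for a Field-style BASIC call; edge-case handling
    in the original language may differ.
    """
    if occurrence < 1:
        raise ValueError("occurrence must be >= 1 in this sketch")
    parts = text.split(delimiter)
    return parts[occurrence - 1] if occurrence <= len(parts) else ""
```

For example, `field("2024|ACME|EUR", "|", 2)` yields `"ACME"`, and `internal_date_to_date(1)` yields 1 January 1968 under the stated assumption. Helpers like these can then be wrapped as UDFs or, preferably, replaced by native column functions where an equivalent exists.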

Hash Partitioning and Sort Stages

DataStage gives explicit control over data partitioning with hash, modulus, range, and round-robin partitioners. Spark’s partitioning model is different: key-based repartitioning and shuffles are hash-based, but Spark uses its own hash function, so the same key will generally not land in the same partition number as it did in DataStage. Migration must account for these differences, particularly in jobs where partitioning affects aggregation or join correctness.
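The point about correctness can be shown with a toy model. In the sketch below, two key-based partitioners place keys differently yet both keep all rows for a given key together, while round-robin does not. The `zlib.crc32` hash is a stand-in chosen for illustration only; it is not the hash that DataStage or Spark uses, and all function names are hypothetical.

```python
import zlib
from collections import defaultdict


def modulus_partition(key: int, num_partitions: int) -> int:
    return key % num_partitions


def hash_partition(key, num_partitions: int) -> int:
    # Stand-in hash for illustration; real engines use their own functions.
    return zlib.crc32(str(key).encode("utf-8")) % num_partitions


def partition_rows(rows, key, partitioner, num_partitions):
    """Assign each row to a partition based on its key value."""
    parts = defaultdict(list)
    for row in rows:
        parts[partitioner(row[key], num_partitions)].append(row)
    return dict(parts)


def round_robin_rows(rows, num_partitions):
    """Assign rows to partitions in turn, ignoring key values."""
    parts = defaultdict(list)
    for i, row in enumerate(rows):
        parts[i % num_partitions].append(row)
    return dict(parts)


def keys_are_colocated(parts, key) -> bool:
    """True if no key value appears in more than one partition."""
    seen = {}
    for pid, rows in parts.items():
        for row in rows:
            if seen.setdefault(row[key], pid) != pid:
                return False
    return True


rows = [{"account_id": k, "amount": 10} for k in (1, 2, 1, 3, 2, 1)]
by_modulus = partition_rows(rows, "account_id", modulus_partition, 2)
by_hash = partition_rows(rows, "account_id", hash_partition, 2)
by_round_robin = round_robin_rows(rows, 2)
```

Per-partition aggregation by `account_id` is safe on `by_modulus` and `by_hash` but not on `by_round_robin`. Logic that depends on which partition number a key lands in, rather than on co-location, is what needs review during migration.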

Metadata and Data Lineage

DataStage stores rich metadata in its repository, including column-level lineage, data types, and transformation logic. Extracting and preserving this metadata during migration is essential for governance and audit compliance. Losing lineage visibility during migration can create regulatory risk in financial services and healthcare.

MigryX: Automated DataStage Migration

MigryX reads DataStage job definitions and generates equivalent code with full fidelity across all job types and stage configurations.

Building a Migration Strategy

A successful DataStage migration follows a disciplined strategy that balances speed with risk management:

- Inventory and classify: Export your DataStage repository and catalog every job by type (parallel, server, sequence), complexity, execution frequency, and business criticality. Retire unused jobs before investing in their conversion.

- Choose your target wisely: Not every job needs to land on the same platform. SQL-heavy transformations may fit dbt, while compute-intensive processing may suit PySpark. A multi-target strategy often yields the best results.

- Automate the repeatable: Tier 1 and Tier 2 jobs—those with standard stages and no custom BASIC routines—should be converted automatically. Reserve manual effort for Tier 3 complexity where custom logic, edge-case partitioning, or mainframe connectivity require expert judgment.

- Validate relentlessly: Parallel-run the DataStage job and its converted equivalent against identical input data. Compare outputs at the row, column, and aggregate level. Automate this validation so it can be repeated with every code change.

- Decommission progressively: Retire DataStage jobs one sequence at a time, not all at once. Each decommission should be a planned event with rollback procedures in place.
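The inventory and tiering steps above can be sketched as a small classifier. The article does not define where Tier 1 ends and Tier 2 begins, so the stage-count threshold below is an assumption, as are the `JobInfo` fields and the list of "standard" stages; adjust all of them to your own inventory.

```python
from dataclasses import dataclass, field

# Assumption: an illustrative set of stage types treated as "standard".
STANDARD_STAGES = {"Transformer", "Lookup", "Join", "Sort", "Aggregator", "Funnel"}
# Assumption: jobs with at most this many standard stages count as Tier 1.
TIER_1_MAX_STAGES = 3


@dataclass
class JobInfo:
    name: str
    job_type: str  # "parallel", "server", or "sequence"
    stages: set = field(default_factory=set)
    uses_basic_routines: bool = False
    reads_mainframe: bool = False


def classify_tier(job: JobInfo) -> int:
    """Return 1 or 2 for automatable jobs and 3 for jobs needing expert review."""
    if job.uses_basic_routines or job.reads_mainframe:
        return 3
    if not job.stages <= STANDARD_STAGES:
        return 3  # at least one non-standard stage
    return 1 if len(job.stages) <= TIER_1_MAX_STAGES else 2


inventory = [
    JobInfo("load_customers", "parallel", {"Transformer", "Lookup"}),
    JobInfo("daily_ledger", "parallel",
            {"Transformer", "Lookup", "Join", "Sort", "Aggregator"}),
    JobInfo("legacy_fees", "server", {"Transformer"}, uses_basic_routines=True),
]
tiers = {job.name: classify_tier(job) for job in inventory}
```

Running a classifier like this over the full repository export gives an early estimate of how much of the estate can be converted automatically and how much needs manual attention.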

Organizations that adopt automated conversion tools typically see much shorter migration timelines than with manual rewriting, along with higher consistency and lower defect rates in the converted code.
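The parallel-run validation step from the strategy above lends itself to automation. The following is a minimal sketch of a comparison at the row, column, and aggregate level, assuming both outputs fit in memory as lists of dictionaries and share a unique key column. The function name and report fields are hypothetical.

```python
def compare_outputs(legacy_rows, converted_rows, key, numeric_columns=()):
    """Compare two job outputs at the row, column, and aggregate level."""
    legacy = {r[key]: r for r in legacy_rows}
    converted = {r[key]: r for r in converted_rows}

    report = {
        "row_count_match": len(legacy_rows) == len(converted_rows),
        "missing_in_converted": sorted(legacy.keys() - converted.keys()),
        "unexpected_in_converted": sorted(converted.keys() - legacy.keys()),
        "column_mismatches": [],
        "aggregate_mismatches": {},
    }

    # Column level: compare every legacy column for keys present on both sides.
    for k in sorted(legacy.keys() & converted.keys()):
        for col, old in legacy[k].items():
            new = converted[k].get(col)
            if old != new:
                report["column_mismatches"].append((k, col, old, new))

    # Aggregate level: compare column totals across the full outputs.
    for col in numeric_columns:
        old_sum = sum(r[col] for r in legacy_rows)
        new_sum = sum(r[col] for r in converted_rows)
        if old_sum != new_sum:
            report["aggregate_mismatches"][col] = (old_sum, new_sum)

    report["passed"] = report["row_count_match"] and not (
        report["missing_in_converted"]
        or report["unexpected_in_converted"]
        or report["column_mismatches"]
        or report["aggregate_mismatches"]
    )
    return report


legacy_out = [{"id": 1, "amount": 100}, {"id": 2, "amount": 250}]
converted_out = [{"id": 1, "amount": 100}, {"id": 2, "amount": 205}]
report = compare_outputs(legacy_out, converted_out, key="id", numeric_columns=["amount"])
```

For floating-point columns an exact equality check is usually too strict, so a tolerance would be needed. At production volumes the same comparison is better expressed in the target engine than in local Python.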

Why Now Is the Right Time

Three converging factors make 2026 the optimal window for DataStage migration:

- Cloud platforms are mature: Databricks, Snowflake, and the major cloud providers offer production-grade ETL infrastructure whose capabilities were far more limited five years ago. The risk of moving to these platforms has dropped dramatically.

- Automation tooling has arrived: Platforms like MigryX can parse DataStage exports, generate target code, and validate conversions at a level of automation that was previously impossible. The economics of migration have fundamentally changed.

- Talent pressure is accelerating: Every year, the pool of available DataStage expertise shrinks while the cost of retaining it grows. Migrating now, while institutional knowledge still exists within your organization, is significantly easier than migrating after key personnel have departed.

The question is not whether to migrate from DataStage but when—and the evidence increasingly points to now.

Why MigryX Is the Only Platform That Handles This Migration

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Deep AST parsing: MigryX’s custom-built parsers achieve 95% accuracy on every supported legacy technology — not through approximation, but through true semantic understanding.

- Merlin AI augmentation: Where deterministic parsing reaches its limit, Merlin AI resolves ambiguities and implicit behaviors, pushing accuracy to 99%.

- Complete coverage: MigryX supports 25+ source technologies including SAS, Informatica, DataStage, SSIS, Alteryx, Talend, ODI, Teradata, and Oracle PL/SQL.

- End-to-end automation: From parsing to conversion to validation — MigryX automates the entire pipeline, not just one step.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration, turning what used to be a multi-year manual effort into a streamlined, validated process.